0xCBE

Now

•

100%

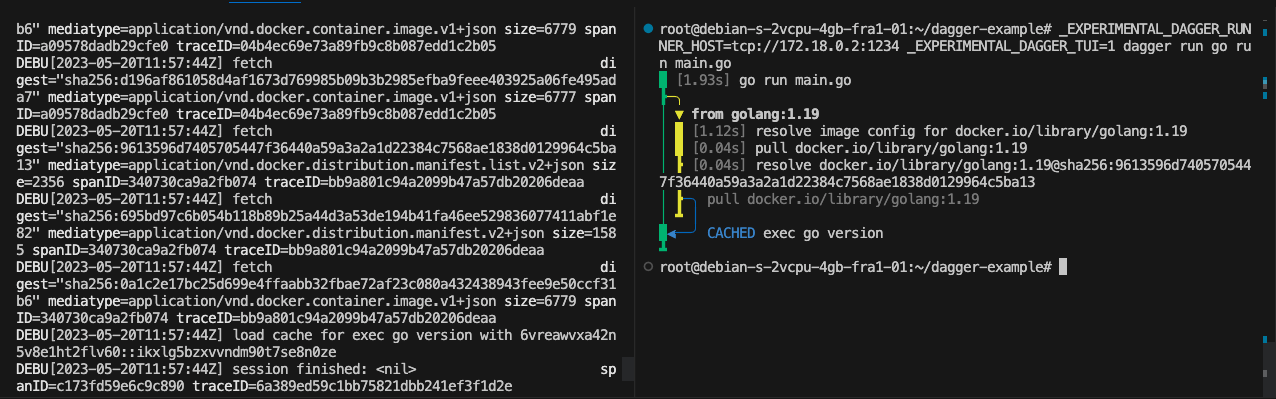

You build a derivation yourself... which I never do. I am on a Mac, so I install packages with brew and orchestrate brew from Home Manager. I find it works well as a compromise.

github.com

cross-posted from: https://infosec.pub/post/397812

> Automated Audit Log Forensic Analysis (ALFA) for Google Workspace is a tool to acquire all Google Workspace audit logs and perform automated forensic analysis on the audit logs using statistics and the MITRE ATT&CK Cloud Framework.
>
> By [Greg Charitonos](https://www.linkedin.com/in/charitonos/) and [BertJanCyber](https://twitter.com/BertJanCyber)

www.chainguard.dev

>We’ve made a few changes to the way we host and distribute our Images over the last year to increase security, give ourselves more control over the distribution, and most importantly to keep our costs under control [...]

>This first post in a 9-part series on Kubernetes Security basics focuses on DevOps culture, container-related threats and how to enable the integration of security into the heart of DevOps.

0xCBE

Now

•

100%

nice! I didn’t know this plant. I’ll try to find some.

0xCBE

Now

•

100%

It’s impressive! What does your infrastructure look like? Is it 100% on-prem?

0xCBE

Now

•

100%

I like basil. At some point I got tired of killing all my plants and started learning how to properly grow and care for greens, starting with basil.

It has plenty of uses and it requires the right amount of care: not too simple, not too complex.

I’ve grown it from seeds, cuttings, in pots, outside and in hydroponics.

www.forbes.com

Not really technical, but it gives some pointers to help you wrap your head around the problem.

www.theregister.com

"Toyota said it had no evidence the data had been misused, and that it discovered the misconfigured cloud system while performing a wider investigation of Toyota Connected Corporation's (TC) cloud systems. TC was also the site of two previous Toyota cloud security failures: one identified in September 2022, and another in mid-May of 2023. As was the case with the previous two cloud exposures, this latest misconfiguration was only discovered years after the fact. **Toyota admitted in this instance that records for around 260,000 domestic Japanese service incidents had been exposed to the web since 2015**. The data lately exposed was innocuous if you believe Toyota – just vehicle device IDs and some map data update files were included."

airisk.io

"database [...] specifically designed for organizations that rely on AI for their operations, providing them with a comprehensive and up-to-date overview of the risks and vulnerabilities associated with publicly available models."

0xCBE

Now

•

100%

nice instance!

0xCBE

Now

•

100%

This stuff is fascinating to think about.

What if prompt injection is not really solvable? I still see jailbreaks for ChatGPT (GPT-4) from time to time.

Let's say we can't validate and sanitize user input to the LLM, so the LLM output also has to be considered untrusted.

In that case security could only sit in front of the connected APIs the LLM is allowed to orchestrate. Would that even scale? How? It feels like we would have to reduce the nondeterministic nature of LLM outputs to a deterministic set of allowed inputs to the APIs... which is a castration of the whole AI vision?
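To make that concrete, here is a rough sketch of what "security in front of the APIs" could look like: the LLM output is treated as untrusted text and only passes through if it matches a fixed allowlist. Everything here is an assumption for illustration: the JSON shape (`tool` / `args`), the tool names `get_weather` and `list_tickets`, and the `validate_call` helper are all hypothetical, not any real framework's API.

```python
import json

# Hypothetical allowlist: tool name -> {argument name: validator}.
# Anything not listed here is rejected, whatever the LLM says.
ALLOWED_CALLS = {
    "get_weather": {
        "city": lambda v: isinstance(v, str) and 0 < len(v) <= 64,
    },
    "list_tickets": {
        "status": lambda v: isinstance(v, str) and v in {"open", "closed"},
    },
}


def validate_call(raw_output: str) -> dict:
    """Parse untrusted LLM output; return the call only if it is allowlisted."""
    try:
        call = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError("output is not valid JSON") from exc

    if not isinstance(call, dict):
        raise ValueError("output is not a JSON object")

    name = call.get("tool")
    args = call.get("args")
    if not isinstance(name, str) or name not in ALLOWED_CALLS:
        raise ValueError("tool is not on the allowlist")
    if not isinstance(args, dict):
        raise ValueError("args must be a JSON object")

    schema = ALLOWED_CALLS[name]
    # Require exactly the expected argument names: no extras, none missing.
    if set(args) != set(schema):
        raise ValueError("unexpected or missing arguments")
    for key, check in schema.items():
        if not check(args[key]):
            raise ValueError(f"argument {key!r} failed validation")

    # Rebuild the call from validated parts only.
    return {"tool": name, "args": {key: args[key] for key in schema}}
```

Something like `validate_call('{"tool": "delete_user", "args": {}}')` raises `ValueError` no matter how the prompt was manipulated, which is the point. It also shows the cost I am worried about: every capability has to be enumerated up front.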

I am also curious about the state of the art in protecting against prompt injection. Do you have any pointers?

0xCBE

Now

•

100%

👋 infra sec blue team lead for a large tech company
