Deliverator

Now

•

100%

Deliverator

Now

•

100%

Pave the earth approved picture

Deliverator

Now

•

100%

Deliverator

Now

•

100%

I really like kbins layout/structure, it's like a mix of reddit and twitter and with some optimization could really be something special.

Deliverator

Now

•

100%

Deliverator

Now

•

100%

That old George Carlin bit is more relevant than ever:

https://www.youtube.com/watch?v=FZq6MfGKpQ0

www.youtube.com

www.youtube.com

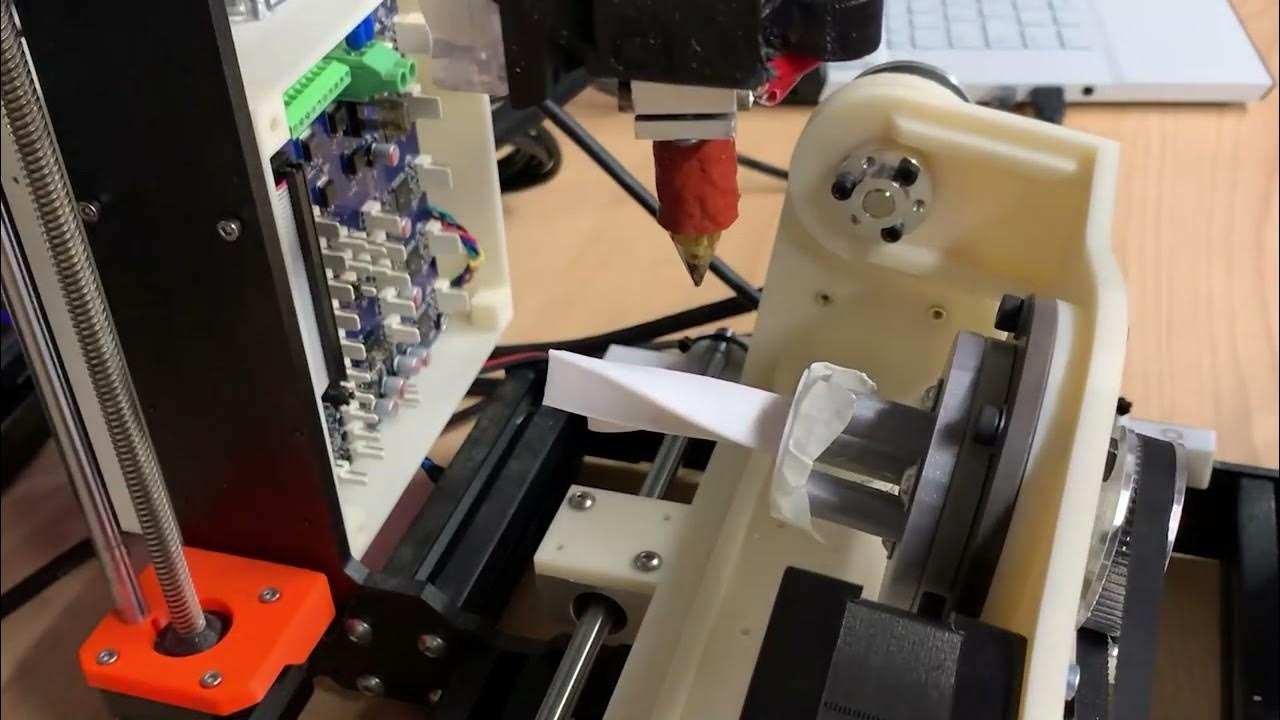

This is 3D printed with converted Prusa i3 using ongoing project called open5xPreprint article can be found in below link:https://arxiv.org/abs/2202.11426Git...

Deliverator

Now

•

100%

Deliverator

Now

•

100%

This is a good example, it's a hydrophone recording of a glass sphere imploding, the level of sound and echo should give you a good idea of the kind of forces we're dealing with:

Deliverator

Now

•

100%

Deliverator

Now

•

100%

I vote the left one or some variant of it

Deliverator

Now

•

100%

Deliverator

Now

•

100%

At first everything about this was infuriating but now I want to see the entire C-suite of Meta and Twitter face off in gladiatorial combat, and we can stream it all on twitch and bet on who will live

Deliverator

Now

•

100%

Deliverator

Now

•

100%

Its that good old American puritanical spirit at work

Deliverator

Now

•

100%

Deliverator

Now

•

100%

"Give Me Convenience or Give Me Death"

As the fediverse continues to grow, let's reflect on some of the things that we disliked most about posting/lurking on reddit and what we can do differently now that we have a chance to build something new.

Deliverator

Now

•

96%

Deliverator

Now

•

96%

It's not a real keygen if there's no chiptune music

Stable Diffusion revolutionised image creation from descriptive text. GPT-2, GPT-3(.5) and GPT-4 demonstrated astonishing performance across a variety of language tasks. ChatGPT introduced such language models to the general public. It is now clear that large language models (LLMs) are here to stay, and will bring about drastic change in the whole ecosystem of online text and images. In this paper we consider what the future might hold. What will happen to GPT-{n} once LLMs contribute much of the language found online? We find that use of model-generated content in training causes irreversible defects in the resulting models, where tails of the original content distribution disappear. We refer to this effect as Model Collapse and show that it can occur in Variational Autoencoders, Gaussian Mixture Models and LLMs. We build theoretical intuition behind the phenomenon and portray its ubiquity amongst all learned generative models. We demonstrate that it has to be taken seriously if we are to sustain the benefits of training from large-scale data scraped from the web. Indeed, the value of data collected about genuine human interactions with systems will be increasingly valuable in the presence of content generated by LLMs in data crawled from the Internet.

Deliverator

Now

•

100%

Deliverator

Now

•

100%

The live page psuedo app is working well for me on grapheneOS with the vanadium browser, I do also get a random 503 error but it's already so much better than a couple days ago

Deliverator

Now

•

100%

Deliverator

Now

•

100%

It would help alot If telecom/internet infrastructure was treated like our other infrastructure. Not to mention the literal billions of dollars in fraud that companies like Verizon and Comcast get away with. I still get mad when I think about how they were given massive sums of money to expand fiber optic infrastructure and gave themselves bonuses instead.

It might be a good idea to have comment replies turned on by default, I feel like it'll help drive discussion/engagement but that's ultimately up to the devs

Deliverator

Now

•

100%

Deliverator

Now

•

100%

Go download Fdroid and go to town, its a great repository of FOSS android apps

Deliverator

Now

•

100%

Deliverator

Now

•

100%

A Scanner Darkly is an incredibly moving and haunting novel to anyone who's ever struggled with drug addiction. For a nonfiction book probably "Kill Anything That Moves" which is about the horrifying and infuriatting reality of the U.S. war in Vietnam, and "The Hot Zone" by Richard Preston

Deliverator

Now

•

100%

Deliverator

Now

•

100%

I use a browser extension (running Librewolf if it matters) which works for entire pages or selected text:

Deliverator

Now

•

100%

Deliverator

Now

•

100%

also check out sister magazines /m/vegetarian and /m/vegan

Deliverator

Now

•

100%

Deliverator

Now

•

100%

Sure, they're going to be an adult company now and turn all of those memes, anime and genital pics into sweet sweet ad money, just like a real megacorp!

Deliverator

Now

•

100%

Deliverator

Now

•

100%

It's really a shame that everything good and valuable in the world has to be boiled down to a fucking dollar sign..

Deliverator

Now

•

100%

Deliverator

Now

•

100%

Here's an archive.org link of the /r/datahoarder post I saw, the downloader tools at the bottom are probably most of interest but still some good links/info there:

Deliverator

Now

•

100%

Deliverator

Now

•

100%

I haven't been on reddit in a few days but before I left I recall seeing someone post a github link that touted something like that for lemmy instances. Haven't found anything for kbin but I know there are tools out there for scraping data/images/etc from different subreddits and users

There's a reason people add site:reddit.com to their google searches, and the top story about how joesmith42069 got 50k karma on their totally dank meme isn't it.

I'm currently getting by with a mixture of Design Spark Mechanical, FreeCAD, and OpenSCAD for prototyping/editing files, I'd love to find a good alternative that isn't from a predatory company like Autodesk

### MOOCs ### Nowadays, there are a couple of really excellent online lectures to get you started. The list is too long to include them all. Every one of the major MOOC sites offers not only one but several good Machine Learning classes, so please check coursera, edX, Udacity yourself to see which ones are interesting to you. However, there are a few that stand out, either because they're very popular or are done by people who are famous for their work in ML. Roughly in order from easiest to hardest, those are: * Andrew Ng's ML-Class at coursera: Focused on application of techniques. Easy to understand, but mathematically very shallow. Good for beginners! [https://www.coursera.org/course/ml](https://www.coursera.org/course/ml) * Hasti/Tibshirani's Statistical Learning [https://lagunita.stanford.edu/courses/HumanitiesSciences/StatLearning/Winter2016/about](https://lagunita.stanford.edu/courses/HumanitiesSciences/StatLearning/Winter2016/about) * Yaser Abu-Mostafa's Learning From Data: Focuses a lot more on theory, but also doable for beginners [https://work.caltech.edu/telecourse.html](https://work.caltech.edu/telecourse.html) * Geoff Hinton's Neural Nets for Machine Learning: As the title says, this is almost exclusively about Neural Networks. [https://www.coursera.org/course/neuralnets](https://www.coursera.org/course/neuralnets) * Hugo Larochelle's Neural Net lectures: Again mostly on Neural Nets, with a focus on Deep Learning [http://www.youtube.com/playlist?list=PL6Xpj9I5qXYEcOhn7TqghAJ6NAPrNmUBH](http://www.youtube.com/playlist?list=PL6Xpj9I5qXYEcOhn7TqghAJ6NAPrNmUBH) * Daphne Koller's Probabilistic Graphical Models Is a very challenging class, but has a lot of good material that few of the other MOOCs here will cover [https://www.coursera.org/course/pgm](https://www.coursera.org/course/pgm) ### Books ### The most often recommended textbooks on general Machine Learning are (in no particular order): * Hasti/Tibshirani/Friedman's Elements of Statistical Learning FREE [http://statweb.stanford.edu/%7Etibs/ElemStatLearn/](http://statweb.stanford.edu/%7Etibs/ElemStatLearn/) * Barber's Bayesian Reasoning and Machine Learning FREE [http://web4.cs.ucl.ac.uk/staff/D.Barber/pmwiki/pmwiki.php?n=Brml.HomePage](http://web4.cs.ucl.ac.uk/staff/D.Barber/pmwiki/pmwiki.php?n=Brml.HomePage) * MacKay's Information Theory, Inference and Learning Algorithms FREE [http://www.inference.phy.cam.ac.uk/itila/book.html](http://www.inference.phy.cam.ac.uk/itila/book.html) * Goodfellow/Bengio/Courville's Deep Learning FREE [http://www.deeplearningbook.org/](http://www.deeplearningbook.org/) * Nielsen's Neural Networks and Deep Learning FREE [http://neuralnetworksanddeeplearning.com/](http://neuralnetworksanddeeplearning.com/) * Graves' Supervised Sequence Labelling with Recurrent Neural Networks FREE [http://www.cs.toronto.edu/%7Egraves/preprint.pdf](http://www.cs.toronto.edu/%7Egraves/preprint.pdf) * Sutton/Barto's Reinforcement Learning: An Introduction; 2nd Edition FREE [https://www.dropbox.com/s/7jl597kllvtm50r/book2015april.pdf](https://www.dropbox.com/s/7jl597kllvtm50r/book2015april.pdf) Note that these books delve deep into math, and might be a bit heavy for complete beginners. If you don't care so much about derivations or how exactly the methods work but would rather just apply them, then the following are good practical intros: * An Introduction to Statistical Learning FREE [http://www-bcf.usc.edu/%7Egareth/ISL/](http://www-bcf.usc.edu/%7Egareth/ISL/) * Probabilistic Programming and Bayesian Methods for Hackers FREE [http://nbviewer.ipython.org/github/CamDavidsonPilon/Probabilistic-Programming-and-Bayesian-Methods-for-Hackers/blob/master/Prologue/Prologue.ipynb](http://nbviewer.ipython.org/github/CamDavidsonPilon/Probabilistic-Programming-and-Bayesian-Methods-for-Hackers/blob/master/Prologue/Prologue.ipynb) There are of course a whole plethora on books that only cover specific subjects, as well as many books about surrounding fields in Math. A very good list has been collected by /u/ilsunil here ### Deep Learning Resources ### * Karpathy's Stanford CS231n: Convolutional Neural Networks for Visual Recognition (Lecture Notes) [http://cs231n.github.io/](http://cs231n.github.io/) * Video Lecture's Stanford CS231n: Convolutional Neural Networks for Visual Recognition [https://www.youtube.com/playlist?list=PLlJy-eBtNFt6EuMxFYRiNRS07MCWN5UIA](https://www.youtube.com/playlist?list=PLlJy-eBtNFt6EuMxFYRiNRS07MCWN5UIA) * Silver's Reinforcement Learning Lectures [https://www.youtube.com/watch?v=2pWv7GOvuf0](https://www.youtube.com/watch?v=2pWv7GOvuf0) * Colah's Informational Blog [http://colah.github.io/](http://colah.github.io/) * Bruna's UC Berkeley Stat212b: Topics Course on Deep Learning [https://joanbruna.github.io/stat212b/](https://joanbruna.github.io/stat212b/) * Overview of Neural Network Architectures [http://www.asimovinstitute.org/neural-network-zoo/](http://www.asimovinstitute.org/neural-network-zoo/) ### Math Resources ### * Strang's Linear Algebra Lectures [https://www.youtube.com/watch?v=ZK3O402wf1c](https://www.youtube.com/watch?v=ZK3O402wf1c) * Kolter/Do's Linear Algebra Review and Reference Notes [http://cs229.stanford.edu/section/cs229-linalg.pdf](http://cs229.stanford.edu/section/cs229-linalg.pdf) * Calculus 1 [https://www.edx.org/course/calculus-1a-differentiation-mitx-18-01-1x](https://www.edx.org/course/calculus-1a-differentiation-mitx-18-01-1x) * Introduction to Probability [https://www.edx.org/course/introduction-probability-science-mitx-6-041x-1](https://www.edx.org/course/introduction-probability-science-mitx-6-041x-1) ### Programming Languages and Software ### In general, the most used languages in ML are probably Python, R and Matlab (with the latter losing more and more ground to the former two). Which one suits you better depends wholy on your personal taste. For R, a lot of functionality is either already in the standard library or can be found through various packages in CRAN. For Python, NumPy/SciPy are a must. From there, Scikit-Learn covers a broad range of ML methods. If you just want to play around a bit and don't do much programming yourself then things like Visions of Chaos, WEKA, KNIME or RapidMiner might be of your liking. Word of caution: a lot of people in this subreddit are very critical of WEKA, so even though it's listed here, it is probably not a good tool to do anything more than just playing around a bit. A more detailed discussion can be found here ### Deep Learning Software, GPU's and Examples ### There are a number of modern deep learning toolkits you can utilize to implement your models. Below, you will find some of the more popular toolkits. This is by no means an exhaustive list. Generally speaking, you should utilize whatever GPU has the most memory, highest clock speed, and most CUDA cores available to you. This was the NVIDIA Titan X from the previous generation. These frameworks are all very close in computation speed, so you should choose the one you prefer in terms of syntax. **Theano** is a python based deep learning toolkit developed by the Montreal Institute of Learning Algorithms, a cutting edge deep learning academic research center and home of many users of this forum. This has a large number of tutorials ranging from beginner to cutting edge research. **Torch** is a Luajit based scientific computing framework developed by Facebook Artificial Intelligence Research (FAIR) and is also in use at Twitter Cortex. There is the torch blog which contains examples of the torch framework in action. **TensorFlow** is a python deep learning framework developed by Google Brain and in use at Google Brain and Deepmind. The newest framework around. Some TensorFlow examples may be found here Do not ask questions on the Google Groups, ask them on stackoverflow **Neon** is a python based deep learning framework built around a custom and highly performant CUDA compiler Maxas by NervanaSys. **Caffe** is an easy to use, beginner friendly deep learning framework. It provides many pretrained models and is built around a protobuf format of implementing neural networks. **Keras** can be used to wrap Theano or TensorFlow for ease of use. ### Datasets and Challenges for Beginners ### There are a lot of good datasets here to try out your new Machine Learning skills. * Kaggle has a lot of challenges to sink your teeth into. Some even offer prize money! [http://www.kaggle.com/](http://www.kaggle.com/) * The UCI Machine Learning Repository is a collection of a lot of good datasets [http://archive.ics.uci.edu/ml/](http://archive.ics.uci.edu/ml/) * [http://blog.mortardata.com/post/67652898761/6-dataset-lists-curated-by-data-scientists](http://blog.mortardata.com/post/67652898761/6-dataset-lists-curated-by-data-scientists) lists some more datasets * Here is a very extensive list of large-scale datasets of all kinds. [http://www.quora.com/Data/Where-can-I-find-large-datasets-open-to-the-public](http://www.quora.com/Data/Where-can-I-find-large-datasets-open-to-the-public) * Another dataset list [http://www.datawrangling.com/some-datasets-available-on-the-web](http://www.datawrangling.com/some-datasets-available-on-the-web) ### Research Oriented Datasets ### In many papers, you will find a few datasets are the most common. Below, you can find the links to some of them. * MNIST A short handwriting dataset that is often used as a sanity check in modern research [http://yann.lecun.com/exdb/mnist/](http://yann.lecun.com/exdb/mnist/) * SVHN Similar to MNIST, but with color numbers. A sanity check in most cases. [http://ufldl.stanford.edu/housenumbers/](http://ufldl.stanford.edu/housenumbers/) * CIFAR-10/0 CIFAR 10 and 100 are two natural color images that are often used with convolutional neural networks for image classification. [https://www.cs.toronto.edu/%7Ekriz/cifar.html](https://www.cs.toronto.edu/%7Ekriz/cifar.html) ### Communities ### * [http://www.datatau.com/](http://www.datatau.com/) is a data-science centric hackernews * [http://metaoptimize.com/qa/](http://metaoptimize.com/qa/) and [http://stats.stackexchange.com/](http://stats.stackexchange.com/) are Stackoverflow-like discussion forums ### ML Research ### Machine Learning is a very active field of research. The two most prominent conferences are without a doubt NIPS and ICML. Both sites contain the pdf-version of the papers accepted there, they're a great way to catch up on the most up-to-date research in the field. Other very good conferences include UAI (general AI), COLT (covers theoretical aspects) and AISTATS. Good journals for ML papers are the Journal of Machine Learning Research, the Journal of Machine Learning and arxiv. ### Other sites and Tutorials ### * [http://datasciencemasters.org/](http://datasciencemasters.org/) is an extensive list of lectures and textbooks for a whole Data Science curriculum * [http://deeplearning.net/](http://deeplearning.net/) * [http://en.wikipedia.org/wiki/Machine\_learning](http://en.wikipedia.org/wiki/Machine_learning) * [http://videolectures.net/Top/Computer\_Science/Machine\_Learning/](http://videolectures.net/Top/Computer_Science/Machine_Learning/) ### FAQ ### * How much Math/Stats should I know? That depends on how deep you want to go. For a first exposure (e.g. Ng's Coursera class) you won't need much math, but in order to understand how the methods really work,having at least an undergrad level of Statistics, Linear Algebra and Optimization won't hurt.

Jail for everyone!

Now

Now

Deliverator

Deliverator@ kbin.socialTales from the Terrordrome